Projects

Predictive Coding and Active Inference in Neurorobotics

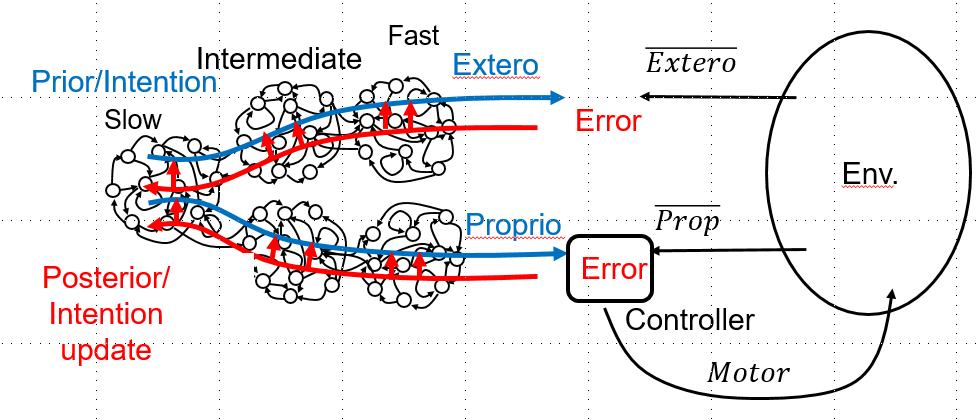

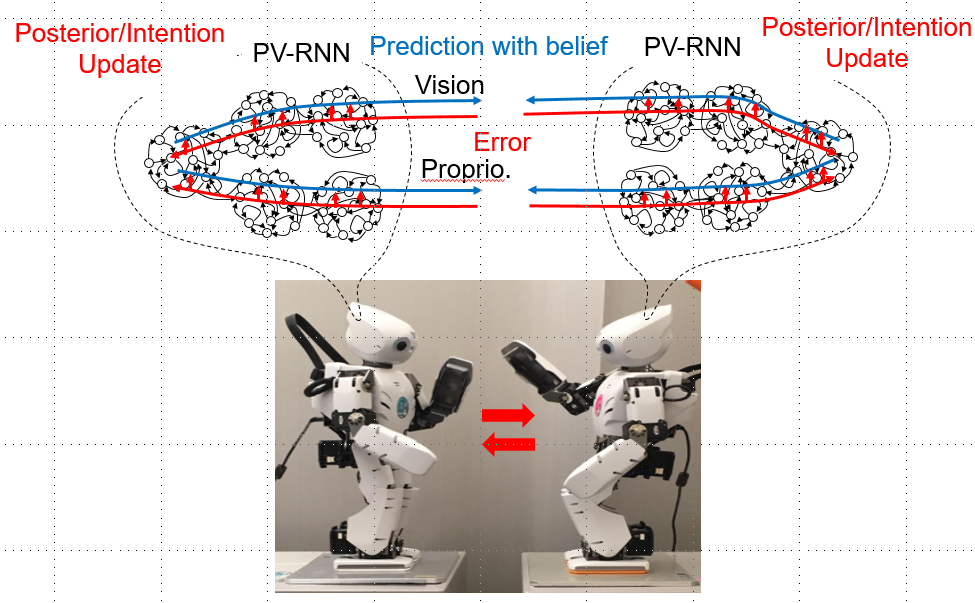

Our group has studied various models analogous to the frameworks of predictive coding and active inference (Friston et al., 2011), using recurrent neural network models applied to neurorobotics, for nearly two decades (Tani & Nolfi, 1999; Tani, 2003). Recently, two extensions have been made: one for the associative and integrative learning of pixel-level video images and proprioception in humanoid robots (Hwang & Tani, 2018), and the other for the development of a predictive-coding-inspired variational Bayes RNN model (PV-RNN) (Ahmadi & Tani, 2018). Our group continues to explore the ideas of predictive coding and active inference using these models.
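As a rough illustration of the core idea behind predictive coding, the sketch below infers a latent state by repeatedly reducing the error between an observation and a top-down prediction. It is a toy under stated assumptions, not any of the group's models: the linear generative model `W @ z`, the function name `infer_latent`, and the step-size rule are all hypothetical choices made for brevity, standing in for the recurrent networks cited above.

```python
import numpy as np

def infer_latent(x, W, steps=200):
    """Infer a latent state z by gradient descent on 0.5 * ||x - W z||^2.

    Assumption: a linear generative model (prediction = W @ z) stands in
    for the RNN models described in the text.
    """
    z = np.zeros(W.shape[1])
    # Step size 1 / (largest singular value)^2 keeps the descent stable.
    lr = 1.0 / np.linalg.norm(W, 2) ** 2
    errors = []
    for _ in range(steps):
        err = x - W @ z                 # prediction error (bottom-up signal)
        errors.append(0.5 * float(err @ err))
        z = z + lr * (W.T @ err)        # error-driven update of the latent state
    return z, errors

rng = np.random.default_rng(0)
W = rng.normal(size=(6, 3))             # hypothetical generative weights
z_true = np.array([1.0, -0.5, 0.25])    # hypothetical hidden cause
x = W @ z_true                          # observation generated by that cause
z_hat, errors = infer_latent(x, W)
print(errors[0], errors[-1])
```

In this toy setting only perception is modeled; active inference would additionally change the observation `x` through action so that it matches the prediction.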

Social Cognitive Neuro-Robotics

This project aims to explore possible neuropsychological mechanisms for social cognition by conducting a set of experiments on robot-human as well as robot-robot interactions. In particular, we examine the underlying mechanisms accounting for spontaneous coupling and decoupling among agents, as well as autonomous shifts from one social context to another. We also investigate how novel or creative behaviors can be co-developed by robots and humans through their interactions. Furthermore, during such interactions in human-in-the-robot-loop experiments, we examine how humans can sense the intention or free will of the robots, and how the robots can do the same for the humans. We study these issues using the frameworks of predictive coding and active inference.

Hierarchical Reinforcement Learning in Continuous Space Domain for Life-Time Adaptation

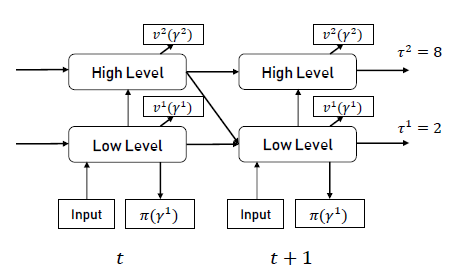

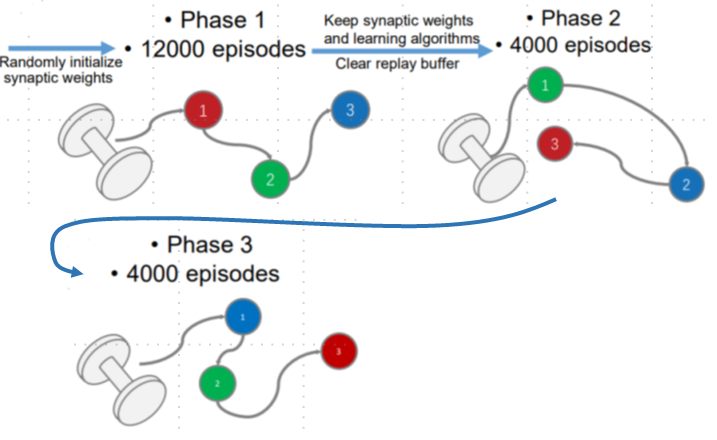

This study (Han & Tani, 2019) aims to achieve life-time learning by developing a hierarchical reinforcement learning model for continuous domains. The model is characterized by the multiple timescale property (Yamashita & Tani, 2008): each layer of the stacked RNNs has a different value for the time constant of its neural activation dynamics and for its discount factor. The model also uses stochastic dynamics not only in the motor outputs but also in all internal neural units to generate exploratory behaviors. Our simulation experiments on a sequential object-reaching task showed that agents employing the model can learn the task by internally developing a compositional information processing mechanism, taking advantage of the multiple timescale property introduced in the stacked RNN. They also showed that the agents were able to adapt successfully to changes in the goal setting, namely the order in which different objects are reached, by utilizing the compositionality developed through relearning processes. Currently, we are investigating how the current model, which is characterized by model-free learning, can also incorporate model-based learning by introducing a sensory prediction capability.
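The multiple timescale property described above can be sketched with a leaky-integrator update in which a larger time constant makes a layer's state change more slowly, and noise is injected into every internal unit. The code below is a minimal sketch under assumptions, not the published model: the two-layer layout, the time constants (2 and 50), the discount factors (0.9 and 0.99), the noise level, the random weights, and the sinusoidal input are all hypothetical, and no learning is performed.

```python
import numpy as np

def leaky_step(u, drive, tau, noise_std, rng):
    """Leaky-integrator update: a larger tau makes the state change more slowly."""
    noise = noise_std * rng.standard_normal(u.shape)  # noise on every internal unit
    return (1.0 - 1.0 / tau) * u + (drive + noise) / tau

def effective_horizon(gamma):
    """Approximate number of steps over which discounted reward still matters."""
    return 1.0 / (1.0 - gamma)

def run_stack(steps=400, n_units=8, taus=(2.0, 50.0), noise_std=0.1, seed=0):
    """Run a two-layer stack; return the mean absolute state change per layer."""
    rng = np.random.default_rng(seed)
    scale = 0.5 / np.sqrt(n_units)
    W_in = rng.normal(0.0, 1.0, (n_units, 2))          # input -> fast layer
    W_up = rng.normal(0.0, scale, (n_units, n_units))  # fast layer -> slow layer
    W_rec = [rng.normal(0.0, scale, (n_units, n_units)) for _ in taus]
    u = [np.zeros(n_units) for _ in taus]
    change = np.zeros(len(taus))
    for t in range(steps):
        phase = 2.0 * np.pi * t / 20.0
        x = np.array([np.sin(phase), np.cos(phase)])   # hypothetical sensory input
        h = [np.tanh(u_k) for u_k in u]
        drives = [W_in @ x + W_rec[0] @ h[0],           # fast (lower) layer
                  W_up @ h[0] + W_rec[1] @ h[1]]        # slow (higher) layer
        for k, tau in enumerate(taus):
            u_next = leaky_step(u[k], drives[k], tau, noise_std, rng)
            change[k] += np.mean(np.abs(u_next - u[k]))
            u[k] = u_next
    return change / steps

fast_change, slow_change = run_stack()
print("mean state change per step (fast, slow):", fast_change, slow_change)
print("reward horizons for gamma = 0.9, 0.99:",
      effective_horizon(0.9), effective_horizon(0.99))
```

Pairing a larger time constant with a discount factor closer to 1 is the design choice being illustrated: the slowly changing higher layer is also the one that weighs rewards over a longer horizon.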

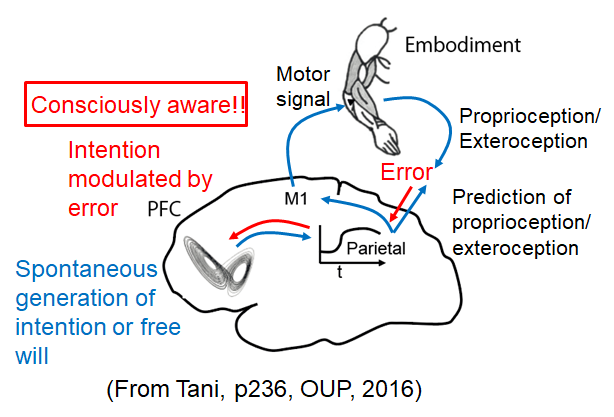

Self, Consciousness, and Free Will

We are working toward an integrative understanding of subjective experience related to the sense of self, consciousness, and free will by conducting synthetic neurorobotics studies. One of our central arguments has been that the self becomes an object of conscious awareness when gaps or conflicts appear between the top-down intention, which carries a proactive view of the world for acting on it, and the perceptual outcome of that action (Tani, 1998). Every effort to minimize this gap, or prediction error, should generate conscious experience of the self and the outer world as separate entities. On the other hand, an unconscious sense of self should run in the background, in terms of the sense of agency (Gallagher, 2000), when everything goes smoothly without conflicts (Tani, 2009, 2016). One of our ongoing studies investigates the possibility that phenomenal consciousness can be accounted for, to some degree, by functional aspects of the system.

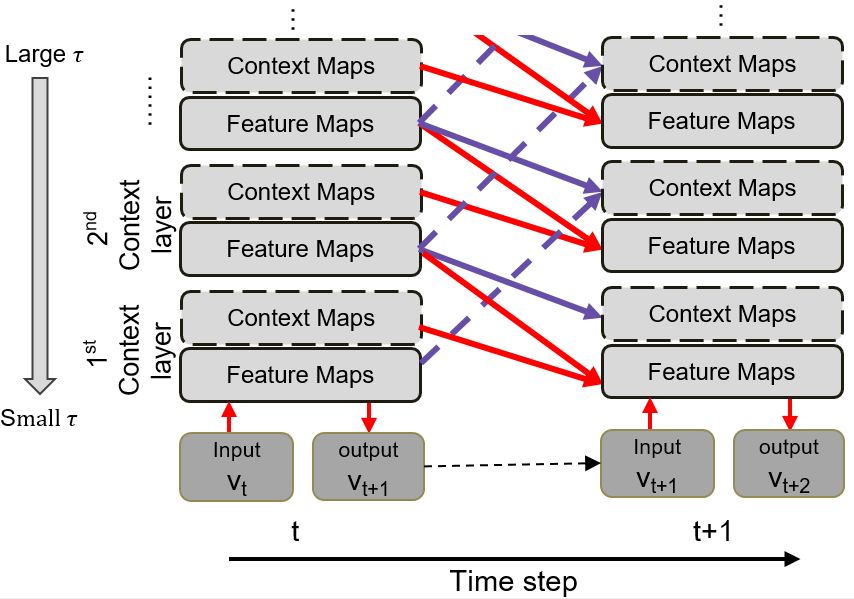

Predictive Coding for Dynamic Vision: Development of Functional Hierarchy in a Multiple Spatio-Temporal Scales RNN Model

This study investigates a novel recurrent neural network model, the predictive multiple spatio-temporal scales RNN (P-MSTRNN), which can generate as well as recognize dynamic visual patterns in the predictive coding framework (Choi et al., 2018). The model is characterized by multiple spatio-temporal scales imposed on neural unit dynamics through which an adequate spatio-temporal hierarchy develops via learning from exemplars.

Neuro-Robotics toward General Intelligence

This project aims to understand the essential brain mechanisms for higher-order cognition through a synthetic approach based on robotics experiments. We build a so-called large-scale brain network (LSBN) model by utilizing available data on the connectivity matrix among local regions of the human brain, in collaboration with Prof. Daeshik Kim at KAIST. The challenge is to show that humanoid robots can adapt to a wide range of cognitive tasks by flexibly combining various cognitive resources developed in the LSBN. Here, the aim is not just to train robots to be good at one particular cognitive task, but to educate them to be good at various cognitive tasks simultaneously, where general intelligence would be required. The project may start by focusing on the main cortical areas, including the prefrontal cortex, the premotor cortex, the temporal cortex, the parietal cortex, and the sensory peripheral regions. It is presumed that general intelligence would emerge from adequate harmony among those brain regions under specific connectivity. The project will use iCub as the humanoid robot platform.