FY2022 Annual Report

Cognitive Neurorobotics Research Unit

Professor Jun Tani

Jochen, Jorge, Wataru, Jeff, Takazumi, Tomoe, Jun, Ted, Hiroki, Nadine, Federico, Alex, Rui (04/28/2023)

Abstract

CNRU started in September 2017. It currently consists of 2 staff scientists, 2 postdoctoral researchers, 1 technical staff member, 7 Ph.D. students, 1 rotation student, 1 visiting researcher, and 1 administrator. The group investigates the essential mechanisms of human embodied cognition using the frameworks of predictive coding, active inference, and model-based reinforcement learning, while conducting various robotics experiments.

1. Staff

As of March 31, 2023

- Prof. Jun Tani, Professor

- Dr. Takazumi Matsumoto, Staff Scientist

- Dr. Jeffrey Queißer, Staff Scientist

- Dr. Jorge Gallego Perez, Postdoctoral Scholar

- Dr. Fabien Benureau, Postdoctoral Scholar

- Dr. Federico Sangati, Technical Staff

- Ms. Nadine Wirkuttis, PhD Student

- Mr. Wataru Ohata, PhD Student

- Mr. Prasanna Vijayaraghavan, PhD Student

- Mr. Hiroki Sawada, PhD Student

- Mr. Alexander Baranski, PhD Student

- Mr. Theodore Jerome Tinker, PhD Student

- Mr. David Pere Tomas Cuesta, PhD Student

- Mr. Rui Fukushima, Rotation Student

- Dr. Jochen Steil, Visiting Scholar, Theoretical Sciences Visiting Program

- Dr. Jeffrey White, Visiting Researcher

- Ms. Tomoe Furuya, Research Unit Administrator

2. Collaborations

2.1 Computational models on reinforcement learning

- Description: Model studies on hierarchical, model-based, variational reinforcement learning.

- Type of collaboration: Joint research

- Researchers:

- Prof. Kenji Doya, Neural Computation Unit, OIST

2.2 Computational models on the sense of self

- Description: Model studies on the sense of primitive and narrative selves.

- Type of collaboration: Joint research

- Researchers:

- Prof. Jeff White, Twente University

3. Activities and Findings

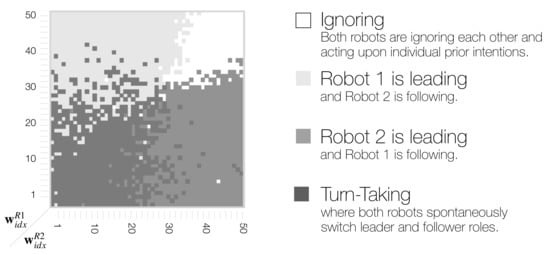

3.1 Turn-Taking Mechanisms in Imitative Interaction: Robotic Social Interaction Based on the Free Energy Principle

This study explains how the leader-follower relationship and turn-taking could develop in a dyadic imitative interaction by conducting robotic simulation experiments based on the free energy principle. Our prior study showed that introducing a parameter during the model training phase can determine leader and follower roles for subsequent imitative interactions. The parameter is defined as w, the so-called meta-prior, and is a weighting factor used to regulate the complexity term versus the accuracy term when minimizing the free energy. This can be read as sensory attenuation, in which the robot’s prior beliefs about action are less sensitive to sensory evidence. The current extended study examines the possibility that the leader-follower relationship shifts depending on changes in w during the interaction phase. Using comprehensive simulation experiments that swept w for both robots during the interaction, we identified a phase space structure with three distinct types of behavioral coordination. Ignoring behavior, in which each robot follows its own intention, was observed in the region in which both ws were set to large values. Leader-follower behavior, in which one robot leads and the other follows, was observed when one w was set larger and the other was set smaller. Spontaneous, random turn-taking between the leader and the follower was observed when both ws were set at smaller or intermediate values. Finally, we examined a case of slowly oscillating w in anti-phase between the two agents during the interaction. The simulation experiment resulted in turn-taking in which the leader-follower relationship switched during determined sequences, accompanied by periodic shifts of ws. An analysis using transfer entropy found that the direction of information flow between the two agents also shifted along with turn-taking. Herein, we discuss qualitative differences between random/spontaneous turn-taking and agreed-upon sequential turn-taking by reviewing both synthetic and empirical studies.

Figure 1. Schematic of phase space structure indicating four distinct types of behavior coordination in dyadic robot interaction context.
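The role of the meta-prior w described above can be illustrated with a minimal sketch. It assumes diagonal Gaussian prior and posterior distributions and a squared-error accuracy term. The function names (`gaussian_kl`, `weighted_free_energy`, `antiphase_w`) and the sinusoidal schedule are hypothetical illustrations, not the model used in the study.

```python
import numpy as np

def gaussian_kl(mu_q, sigma_q, mu_p, sigma_p):
    """KL(q || p) between diagonal Gaussians, summed over dimensions."""
    var_q, var_p = sigma_q ** 2, sigma_p ** 2
    per_dim = np.log(sigma_p / sigma_q) + (var_q + (mu_q - mu_p) ** 2) / (2.0 * var_p) - 0.5
    return float(np.sum(per_dim))

def weighted_free_energy(w, mu_q, sigma_q, mu_p, sigma_p, prediction, observation):
    """Free energy with the complexity term scaled by the meta-prior w.

    Assumption: the accuracy term is approximated by a squared prediction
    error (a unit-variance Gaussian likelihood, up to a constant).
    """
    complexity = gaussian_kl(mu_q, sigma_q, mu_p, sigma_p)
    prediction_error = 0.5 * float(np.sum((prediction - observation) ** 2))
    return w * complexity + prediction_error

def antiphase_w(t, period, w_low, w_high):
    """Meta-priors of two agents oscillating in anti-phase (hypothetical schedule)."""
    mid, amp = 0.5 * (w_high + w_low), 0.5 * (w_high - w_low)
    phase = 2.0 * np.pi * t / period
    return mid + amp * np.sin(phase), mid - amp * np.sin(phase)
```

With a large w, any deviation of the posterior from the prior is penalized heavily, so the agent tends to keep its own intention. With a small w, minimizing prediction error dominates and the agent adapts to what it observes.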

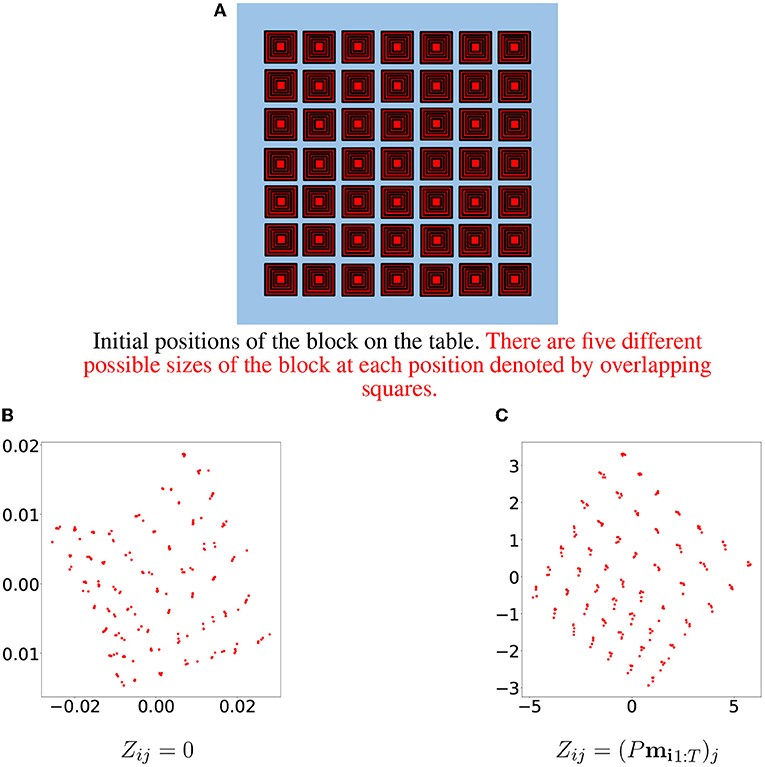

3.2 Initialization of latent space coordinates via random linear projections for learning robotic sensory-motor sequences

Robot kinematic data, despite being high-dimensional, is highly correlated, especially when considering motions grouped in certain primitives. These almost linear correlations within primitives allow us to interpret motions as points drawn close to a union of low-dimensional affine subspaces in the space of all motions. Motivated by results of embedding theory, in particular, generalizations of the Whitney embedding theorem, we show that random linear projection of motor sequences into low-dimensional space loses very little information about the structure of kinematic data. Projected points offer good initial estimates for values of latent variables in a generative model of robot sensory-motor behavior primitives. We conducted a series of experiments in which we trained a Recurrent Neural Network to generate sensory-motor sequences for a robotic manipulator with 9 degrees of freedom. Experimental results demonstrate substantial improvement in generalization abilities for unobserved samples during initialization of latent variables with a random linear projection of motor data over initialization with zero or random values. Moreover, latent space is well-structured such that samples belonging to different primitives are well separated from the onset of the training process.

Figure 2. Initial positions of the block are depicted in (A). Each position corresponds to five samples differing in block size. The first two principal components of the learned latent vectors for samples belonging to one specific primitive are depicted in (B) for the zero initialization method and in (C) for the random linear projections initialization method.
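The initialization idea can be sketched as follows, under stated assumptions: each motor sequence is flattened into one vector, centered, and multiplied by a Gaussian random matrix. The function name `random_projection_init`, the centering step, and the scaling are illustrative choices rather than the published procedure.

```python
import numpy as np

def random_projection_init(sequences, latent_dim, seed=0):
    """Initial latent coordinates from a random linear projection.

    sequences: array of shape (n_samples, n_steps, n_joints).
    Returns an array of shape (n_samples, latent_dim).
    """
    rng = np.random.default_rng(seed)
    # Flatten each motor sequence into a single row vector.
    flat = sequences.reshape(sequences.shape[0], -1)
    # Center the data (assumed preprocessing step).
    flat = flat - flat.mean(axis=0, keepdims=True)
    # Gaussian random matrix; the scaling keeps projected distances at a comparable magnitude.
    projection = rng.normal(size=(flat.shape[1], latent_dim)) / np.sqrt(latent_dim)
    return flat @ projection
```

The returned rows would then serve as starting values for the per-sample latent variables, in place of zeros or random draws, before training the generative model.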

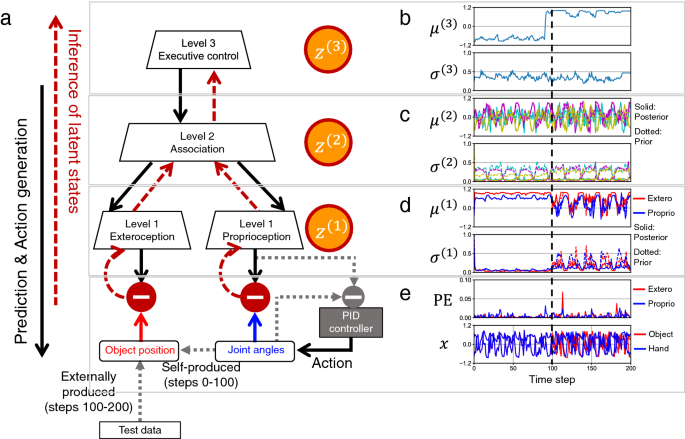

3.3 Emergence of sensory attenuation based upon the free-energy principle

The brain attenuates its responses to self‑produced exteroceptions (e.g., we cannot tickle ourselves). Is this phenomenon, known as sensory attenuation, enabled innately, or acquired through learning? Here, our simulation study using a multimodal hierarchical recurrent neural network model, based on variational free‑energy minimization, shows that a mechanism for sensory attenuation can develop through learning of two distinct types of sensorimotor experience, involving self‑produced or externally produced exteroceptions. For each sensorimotor context, a particular free‑energy state emerged through interaction between top‑down prediction with precision and bottom‑up sensory prediction error from each sensory area. The executive area in the network served as an information hub. Consequently, shifts between the two sensorimotor contexts triggered transitions from one free‑energy state to another in the network via executive control, which caused shifts between attenuating and amplifying prediction‑error‑induced responses in the sensory areas. This study situates emergence of sensory attenuation (or self‑other distinction) in development of distinct free‑energy states in the dynamic hierarchical neural system.

Figure 3. Test setting and an example of the result. (a) Online inference of latent states and action generation by a PID controller, with synaptic weights fixed. (b) Executive-level posterior distribution. (c) Association-level posterior and prior distributions. (d) Sensory-level posterior and prior distributions in exteroceptive and proprioceptive areas. (e) Prediction error (PE) and real sensations for exteroception and proprioception. For clarity, proprioception is represented as the 2-dimensional hand position, although the actual proprioception comprises 3-dimensional joint angles.
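As a rough illustration of how precision can attenuate or amplify prediction-error-induced responses, the sketch below scales a raw prediction error by the inverse variance of the prediction. This is the generic predictive-coding form and an assumption here. The function name `precision_weighted_error` is hypothetical and does not describe the network used in the study.

```python
import numpy as np

def precision_weighted_error(sensation, prediction, sigma):
    """Prediction error scaled by precision (inverse variance) of the prediction."""
    precision = 1.0 / np.square(sigma)
    return precision * (sensation - prediction)

# Same raw error, different predicted uncertainty (illustrative values).
raw_sensation = np.array([1.0])
raw_prediction = np.array([0.0])
amplified = precision_weighted_error(raw_sensation, raw_prediction, sigma=0.5)
attenuated = precision_weighted_error(raw_sensation, raw_prediction, sigma=2.0)
```

In this form, a low-precision (large sigma) prediction yields a small weighted error for the same raw mismatch, while a high-precision prediction yields a large one.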

3.4 Goal-directed Planning and Goal Understanding by Extended Active Inference: Evaluation Through Simulated and Physical Robot Experiments

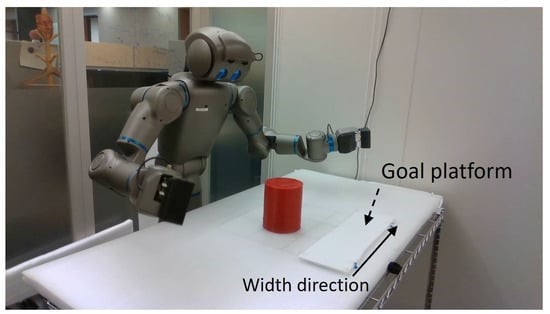

We show that goal-directed action planning and generation in a teleological framework can be formulated by extending the active inference framework. The proposed model, which is built on a variational recurrent neural network model, is characterized by three essential features: (1) goals can be specified both for static sensory states, e.g., goal images to be reached, and for dynamic processes, e.g., moving around an object; (2) the model can not only generate goal-directed action plans, but can also understand goals through sensory observation; and (3) the model generates future action plans for given goals based on the best estimate of the current state, inferred from past sensory observations. The proposed model is evaluated by conducting experiments on a simulated mobile agent as well as on a real humanoid robot performing object manipulation.

Figure 4. The Torobo humanoid robot, with the workspace, goal platform and object.

4. Publications

4.1 Journals

- Soda, T., Ahmadreza, A., Tani, J., Honda, M., Hanakawa, T., & Yamashita, Y. (2023). Simulating Developmental Diversity: Impact of Neural Stochasticity on Atypical Flexibility and Hierarchy. Accepted in Frontiers in Psychiatry, section Psychopathology.

- Wirkuttis, N., Ohata, W., & Tani, J. (2023). Turn-Taking Mechanisms in Imitative Interaction: Robotic Social Interaction Based on the Free Energy Principle. Entropy, 25(2), 263.

- Nikulin, V., & Tani, J. (2022). Initialization of Latent Space Coordinates via Random Linear Projections for Learning Robotic Sensory-Motor Sequences. Frontiers in Neurorobotics, 16:891031. DOI: 10.3389/fnbot.2022.891031

- Idei, H., Ohata, W., Yamashita, Y., Ogata, T., & Tani, J. (2022). Emergence of sensory attenuation based upon the free-energy principle. Scientific Reports, 12:14542. DOI: 10.1038/s41598-022-18207-7

- Matsumoto, T., Ohata, W., Benureau, F. C., & Tani, J. (2022). Goal-directed Planning and Goal Understanding by Extended Active Inference: Evaluation Through Simulated and Physical Robot Experiments. Entropy, 24(4), 469.

4.2 Books and refereed conference proceedings

- Benureau, F. C., & Tani, J. (2022). Morphological Wobbling Can Help Robots Learn. 2022 IEEE International Conference on Development and Learning (ICDL). 257-264.

- Han, D., Kozuno, T., Luo, X., Chen, Z. Y., Doya, K., Yang, Y., & Li, D. (2022). Variational oracle guiding for reinforcement learning. In International Conference on Learning Representations.

4.3 Invited seminars and talks

- Invited talk, Tani, J. Exploring robotic minds by extending the framework of predictive coding and active inference. The Center for Robotics and Neural Systems (CRNS) (Online), University of Plymouth, UK, February 15, 2023.

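- Invited talk, Tani, J. Emergence of higher-order cognitive mechanisms: a report from robotic experimental studies extending active inference. Manufacturing Science and Technology Center, Cross-disciplinary Technology Seeds "Opinion Exchange Meeting": Cognitive Brain Robots Operating on the Free Energy Principle (Online), January 17, 2023.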

- Invited talk, Tani, J. Goal-directed Planning and Goal Understanding of Robots by Extended Active Inference. RoboTac 2022, The IROS 2022 workshop, Kyoto, October 23, 2022.

- Invited talk, Tani, J. Lifelong Learning of High-level Cognitive and Reasoning Skills. IROS 2022 workshop, Kyoto, October 23, 2022.

- Invited talk, Tani, J. Cognitive Neurorobotics Studies Using the Frameworks of Predictive Coding and Active Inference. International Symposium on Artificial Intelligence and Brain Science 2022, Okinawa Institute of Science and Technology Graduate University, Okinawa, Japan, July 5, 2022.

- Invited talk, Tani, J. Neurorobotics experiments on goal-directed planning based on active inference. The Fifth International Workshop on Intrinsically Motivated Open-ended Learning IMOL 2022 (Online), Max Planck Institute for Intelligent Systems, Tübingen, Germany, April 4-6, 2022.

5. Intellectual Property Rights and Other Specific Achievements

Nothing to report.

6. Meetings and Events

6.1 Seminars

Towards Hierarchical Motor Control

- Date: Wednesday, December 7, 2022 - 16:00 to 16:45

- Venue: C209, Center Bldg. (Hybrid)

- Speaker: Dr. Steve Heim, Biomimetic Robotics Lab at MIT

6.2 Events

Workshop on Life Mind Continuity

- Date: Friday, November 25, 2022 - 13:00 to 17:35

- Venue: Meeting Room 1, Conference Center

- Speakers:

- Prof. Kazuo Okanoya, Teikyo University

- Prof. Tom Froese, OIST

- Prof. Takashi Ikegami, University of Tokyo

- Prof. Shigeru Kitazawa, Osaka University

- Prof. Jun Tani, OIST

7. Other

Nothing to report.