Realtime Social Interactions

Real-time social interaction is a crucial part of our emotional and cognitive life. We are irreducibly social animals: relationships allow us to accomplish things we could never do on our own, give our lives meaning, and ensure our well-being. However, this reliance on others might go even deeper. It has been suggested that some of our individual cognitive achievements would not even be possible without the social interactions we come to rely on. In a set of smaller projects conducted within our unit, we explore how real-time social interaction is realized in our brains and bodies, using cognitive neuroscience methodology, and we probe the minimal requirements for interactions to transform individual cognition, using computational simulations and a swarm robotics approach.

Video Interactions

Recent evidence suggests that when two people are interacting (playing a card game, singing, having a conversation), their bodies and brains synchronize in significant ways: their movements, heart rates and even brain waves converge on a common rhythm. Given that this is often associated with interactions that are more successful and pleasant, it seems that synchronization is a core component of real-time human interaction. However, exactly how it is realized, and how it may be affected by the different ways in which we can interact, is still poorly understood. This question is addressed in a number of projects in our unit. Some focus on minimal models of synchrony and minimal real-time haptic interactions. In the Video Interactions project, we aim to tackle a higher-level question: how digital modes of interaction (such as video chat) affect our inter-body and inter-brain synchrony. This question has become particularly relevant given the recent experience of a worldwide pandemic.

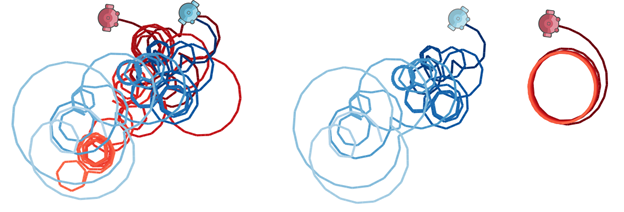

Dyadic Interactions Simulations

A number of models have investigated the role of social interaction in the neural and behavioral complexity of agents by implementing minimal cognitive agent-based simulations that follow an evolutionary robotics methodology. For instance, previous studies have demonstrated that social interaction increases the complexity of an agent’s neural activity beyond what can be achieved in isolation.

Our aim is to extend these studies to better understand how social interaction makes a difference and transforms the agents’ neural and behavioral complexity under different conditions. We have done this by evolving agents under three conditions: pair interaction, a ghost partner condition (in which one of the agents plays back pre-recorded behavior), and isolation, and by varying the number of neurons in the neural architecture of the agents. To compare these conditions, we have analyzed the data using statistical analysis, dynamical systems analysis, and different metrics of complexity (typically based on entropy). For example, in a more recent study, we have shown that smaller-brained agents in social conditions are able to produce neural activity comparable in complexity to that of larger-brained agents in solitary conditions.
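To give a concrete sense of what an entropy-based complexity metric can look like, the following is a minimal sketch in Python. It is an illustration only, not the metric used in our studies: it assumes that a neuron's activity is recorded as a list of values in the range [0, 1], and the function name `shannon_entropy` and its parameters `n_bins`, `lo` and `hi` are hypothetical choices made for this example. The sketch bins the activity values and computes the Shannon entropy of the resulting distribution, so that activity spread over many levels scores higher than activity stuck at a single level.

```python
import math
from collections import Counter


def shannon_entropy(samples, n_bins=10, lo=0.0, hi=1.0):
    """Shannon entropy (in bits) of a 1-D activity trace.

    Values are assigned to n_bins equal-width bins on [lo, hi];
    values outside that range are clamped to the first or last bin.
    All names and defaults here are illustrative assumptions.
    """
    if not samples:
        raise ValueError("samples must not be empty")
    width = (hi - lo) / n_bins
    # Count how many samples fall into each bin.
    counts = Counter(
        min(max(int((x - lo) / width), 0), n_bins - 1) for x in samples
    )
    total = len(samples)
    # H = -sum(p * log2(p)) over the occupied bins.
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# A trace that never changes carries no information,
# while one spread evenly over four bins carries two bits.
flat_trace = [0.5] * 100
varied_trace = [0.1, 0.35, 0.6, 0.85] * 25
print(shannon_entropy(flat_trace, n_bins=4))
print(shannon_entropy(varied_trace, n_bins=4))
```

In a comparison like the one described above, such a score could be computed for each neuron's recorded activity and then compared across the pair, ghost partner and isolation conditions. The choice of bin count affects the result, so it would need to be held constant across conditions.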